AI Assistant in Customer Service with RAG System

When Experienced Minds Leave, Knowledge Leaves with Them

RATIONAL is the world market leader for thermal food preparation in professional kitchens, and its appliances are in use worldwide. An essential part of its value proposition is the ChefLine, a telephone service through which chefs receive hands-on, expert advice on appliances and recipes in real time.

But the skilled labor shortage did not spare customer service. Long-tenured support staff held extensive experiential knowledge that existed in no database, and too few qualified successors were available to take it over. At the same time, the installed appliance base was growing, and with it the volume of inquiries.

The knowledge that made the ChefLine so valuable was scattered across thousands of sources: operating manuals for different appliance generations, recipe databases, service handbooks, videos, internal documentation. No single employee could oversee it all. And no conventional FAQ system could capture the breadth and depth of the inquiries.

RATIONAL recognized that digital technologies could secure customer service in the long term. But how exactly? While the company was searching for concrete use cases, PLAN D entered the picture. The brief: develop an MVP in 100 days that demonstrates how AI can make the company's expertise accessible for support.

From Implicit Knowledge to AI Assistant — in 100 Days

Identifying potential

Before a single line of code was written, we analyzed the situation. What issues do callers raise? Which languages are used? How are customers and appliances identified? What data exists, what's missing? In workshops, we evaluated potential use cases by technical feasibility, data availability, and economic viability.

We decided on an AI assistant for customer service: a system that independently answers recurring inquiries and provides targeted relief for the service team.

Structuring and unlocking knowledge

The biggest challenge was not the language model, but the data. Recipes, operating manuals, technical specifications, and service documentation existed in a wide variety of formats and systems. Our data team systematically unlocked, cleaned, and consolidated these sources into a unified knowledge pool — the foundation for the entire system.
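A minimal sketch of what such a consolidation step could look like, assuming the sources arrive as loosely structured dictionaries. The field names (`body`, `content`, `lang`, `type`) and the `KnowledgeRecord` schema are hypothetical and not taken from the project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KnowledgeRecord:
    """One entry in the unified knowledge pool (hypothetical schema)."""
    source_type: str  # e.g. "manual", "recipe", "service"
    title: str
    language: str
    text: str


def normalize_text(raw: str) -> str:
    """Collapse whitespace runs so text from different exports compares cleanly."""
    return " ".join(raw.split())


def consolidate(raw_items: list[dict]) -> list[KnowledgeRecord]:
    """Map heterogeneous source dicts to one schema and drop empty or duplicate texts."""
    seen: set[tuple[str, str]] = set()
    pool: list[KnowledgeRecord] = []
    for item in raw_items:
        # Different source systems may store the main text under different keys.
        text = normalize_text(item.get("body") or item.get("content") or "")
        if not text:
            continue  # nothing usable in this item
        language = item.get("lang", "und")
        key = (language, text.lower())
        if key in seen:
            continue  # same text already in the pool
        seen.add(key)
        pool.append(
            KnowledgeRecord(
                source_type=item.get("type", "unknown"),
                title=normalize_text(item.get("title", "")),
                language=language,
                text=text,
            )
        )
    return pool
```

In practice, format-specific extraction (PDF, video transcripts, database exports) would run before this step. The sketch only covers the final mapping to a single schema.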

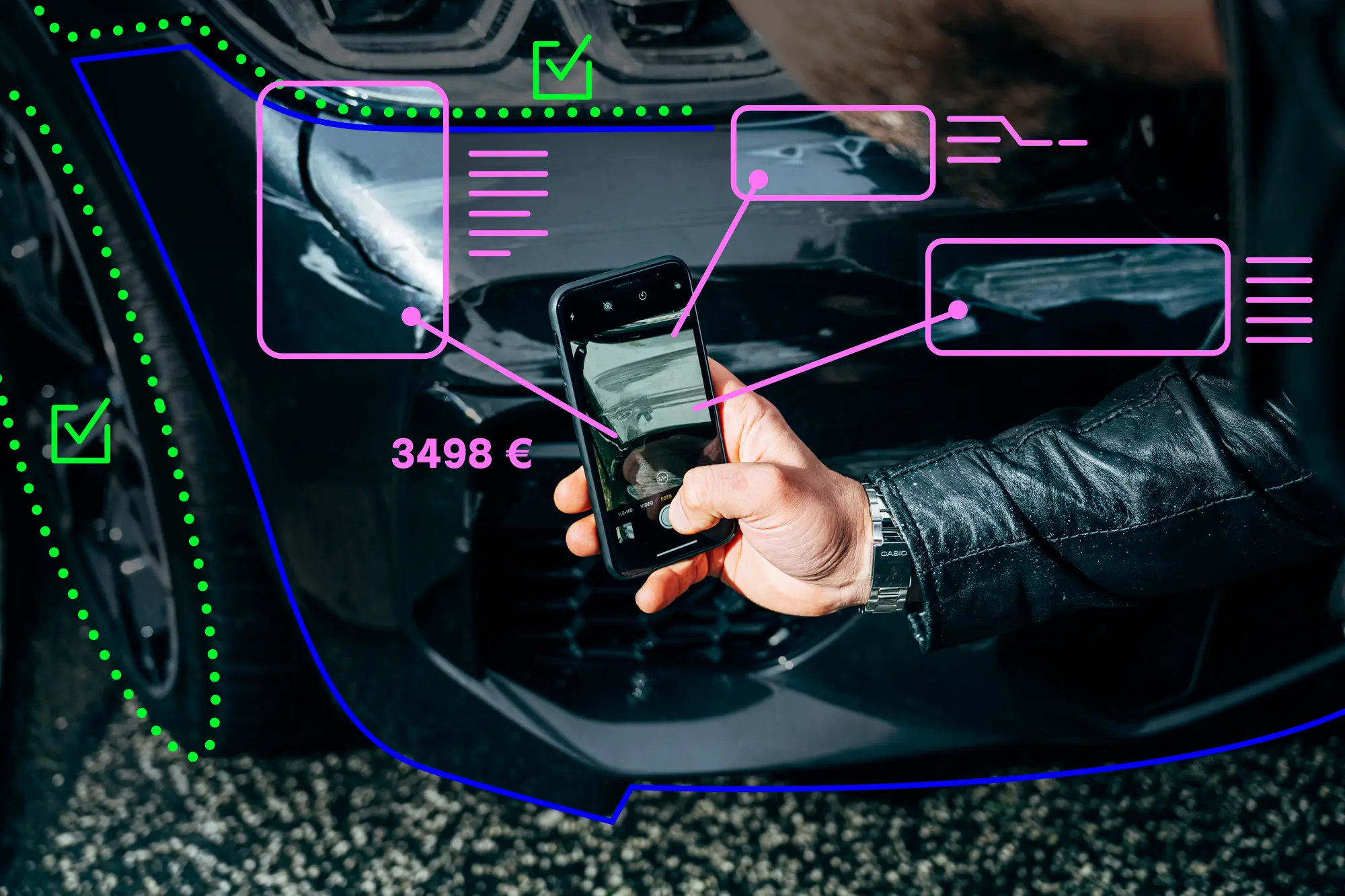

RAG system on proprietary company data

The core of the solution is a RAG system (Retrieval Augmented Generation). Instead of training a language model on RATIONAL's data, RAG takes a different approach: documents are broken down into semantic units and stored as vectors in a database, which is searched anew for each query. The language model generates its answer exclusively from the text passages found, not from its general training knowledge.
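The retrieval step can be illustrated with a toy example. This is a sketch under simplifying assumptions, not the system described here: a bag-of-words count vector stands in for a real embedding model, a Python list stands in for the vector database, and the sample passages are invented placeholders rather than RATIONAL documentation.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, passages: list[str], top_k: int = 2) -> list[tuple[float, str]]:
    """Return up to top_k passages with a non-zero similarity to the query, best first."""
    query_vector = embed(query)
    scored = [(cosine(query_vector, embed(p)), p) for p in passages]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [(score, passage) for score, passage in scored[:top_k] if score > 0]


# Invented placeholder passages, for illustration only.
PASSAGES = [
    "To adjust the display brightness, open the settings menu and select display.",
    "Brownies bake evenly with a combination of dry heat and low fan speed.",
    "Insert the cleaning tablet before starting the cleaning program.",
]

if __name__ == "__main__":
    for score, passage in retrieve("How do I adjust the display brightness?", PASSAGES):
        print(f"{score:.2f}  {passage}")
```

The passages returned by `retrieve` are what would then be handed to the language model as the sole basis for its answer.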

The result: the AI assistant answers accurately and based on verified company sources. Whether “How do I adjust the display brightness?” or “What's the best cooking process for brownies?” — the system finds the relevant information in the knowledge base and formulates a comprehensible answer.

Appliance recognition via IoT

Via RATIONAL login or QR codes on the appliances, the system automatically recognizes the hardware version and software build. This allows the AI assistant to give appliance-specific answers without users having to enter their device data manually.
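One way appliance data could narrow down the answer is by filtering the knowledge base before retrieval. The sketch below assumes each passage is tagged with the hardware version it applies to; the `Appliance` and `Passage` types and the version labels are hypothetical, and the same pattern would extend to the software build.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Appliance:
    """Device data as recognized via login or QR code (hypothetical fields)."""
    hardware_version: str
    software_build: str


@dataclass(frozen=True)
class Passage:
    """A knowledge-base passage, optionally tied to one hardware version."""
    text: str
    hardware_version: Optional[str] = None  # None means: applies to all appliances


def passages_for(appliance: Appliance, passages: list[Passage]) -> list[Passage]:
    """Keep general passages and those matching the appliance's hardware version."""
    return [
        p for p in passages
        if p.hardware_version is None or p.hardware_version == appliance.hardware_version
    ]
```

Filtering before retrieval keeps instructions for other appliance generations out of the candidate set entirely, rather than relying on the language model to ignore them.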

From Idea to Digital Colleague

After 100 days, a functional MVP was ready: an AI assistant that can answer both predefined service scenarios and freely formulated questions. The system combines RATIONAL's consolidated expertise with the linguistic competence of a large language model — precise in content and natural in dialogue.

The strategic value extends beyond the MVP. The organization's previously implicit knowledge has been transferred into a digital, searchable knowledge base. When experienced employees leave, their knowledge is preserved. At the same time, the solution enables scaling of customer service that would be unachievable with telephone support alone.

RATIONAL used the MVP as a proof of concept to convince internal stakeholders and substantiate the decision for further development. In a roadmap, we outlined additional development stages, potential analyses, and cost estimates.

Facts & Figures

100

2.402

3.660

2.692

FAQs

At RATIONAL, the ChefLine answered telephone questions about professional kitchen appliances and recipes, a service that depended heavily on the experiential knowledge of individual employees.

The RAG system now handles part of these tasks: it searches RATIONAL's entire knowledge base, including operating manuals, recipes, and service documentation, and formulates well-founded answers in natural language.

Recurring standard inquiries are answered directly by the AI assistant. More complex cases continue to go to the service team. This provides targeted relief for qualified employees, who can focus on demanding issues.
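How an inquiry is classified as standard or complex is not described here. One plausible approach, shown purely as an assumption, is to hand off to the service team whenever the best retrieval score falls below a threshold. The `route` function and the threshold value of 0.5 are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoutingDecision:
    handler: str  # "assistant" or "service_team"
    reason: str


def route(best_score: float, threshold: float = 0.5) -> RoutingDecision:
    """Let the assistant answer only when retrieval found a sufficiently close match."""
    if best_score >= threshold:
        return RoutingDecision("assistant", "knowledge base contains a close match")
    return RoutingDecision("service_team", "no sufficiently close match in the knowledge base")
```

A real deployment would likely combine several signals, such as topic, user feedback, or an explicit request for a human, and the threshold would need tuning against real inquiries.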

In the RATIONAL project, one central challenge was front and center: the expertise of ChefLine employees existed predominantly in their heads, not in a database.

Our approach: all available sources, including recipes, operating manuals for different appliance generations, service handbooks, and videos, were systematically unlocked, cleaned, and consolidated into a unified knowledge base. The RAG system now makes this knowledge accessible through natural language queries.

The decisive effect: when experienced employees leave the company, their knowledge remains available in the system.

A generic large language model (LLM) generates answers based on statistical probabilities from its training knowledge. That sounds convincing but can be wrong in detail, because the model has no knowledge of specific RATIONAL products, recipes, or service processes.

A RAG system solves exactly this problem: with every query, it draws on the actual company data, including operating manuals, recipes, and service documentation. The language model formulates its answer exclusively based on these verified sources. The result is factually correct answers rather than plausible-sounding guesses.

In concrete terms: when a chef asks about the right cooking level for a steak or a technician about the right cleaning tablet, the AI assistant delivers an answer based on genuine RATIONAL product data.

At the same time, the system addresses the skilled labor shortage in technical service. Experiential knowledge that previously existed only in the minds of individual employees becomes digitally accessible: for customers directly and for new service staff as a knowledge resource. The result is less dependency on individual knowledge holders, faster onboarding, and customer service that delivers quality even with a smaller team.

The AI assistant for RATIONAL is based on the RAG principle (Retrieval Augmented Generation). In the first step, all relevant documents (recipes, operating manuals, service documentation) are broken down into semantic units and stored as vectors in a database.
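Breaking documents into units can be sketched as follows. This version assumes paragraphs separated by blank lines are a reasonable proxy for semantic units and packs them into chunks up to a size limit; the actual splitting strategy used in the project is not specified, and `chunk_document` is a hypothetical helper.

```python
def chunk_document(text: str, max_chars: int = 400) -> list[str]:
    """Split on blank lines, then pack consecutive paragraphs into chunks.

    A chunk is closed as soon as adding the next paragraph would exceed
    max_chars. A single paragraph longer than max_chars is kept whole.
    """
    paragraphs = [" ".join(p.split()) for p in text.split("\n\n") if p.strip()]
    chunks: list[str] = []
    current = ""
    for paragraph in paragraphs:
        candidate = f"{current} {paragraph}".strip()
        if current and len(candidate) > max_chars:
            chunks.append(current)
            current = paragraph
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks
```

Each resulting chunk would then be converted to a vector by an embedding model and stored together with a reference to its source document.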

When a user asks a question, the system searches for the most relevant text passages and passes them to a large language model. The language model generates its answer exclusively based on these retrieved sources, not from general training knowledge. This keeps answers factually correct and traceable.
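The hand-off to the language model can be sketched as prompt assembly. The wording of the instructions and the `build_prompt` helper are assumptions for illustration; the call to the language model itself is left out because no specific model or API is named here.

```python
def build_prompt(question: str, passages: list[tuple[str, str]]) -> str:
    """Assemble a prompt that restricts the model to the retrieved passages.

    passages is a list of (source_id, text) pairs. Source ids are included
    so that the answer can cite where each statement comes from.
    """
    if not passages:
        raise ValueError("no passages retrieved; the question should be escalated instead")
    sources = "\n".join(f"[{source_id}] {text}" for source_id, text in passages)
    return (
        "Answer the question using only the sources below. "
        "Cite the source id for each statement. "
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )
```

Keeping source ids in the prompt is what makes answers traceable: each statement in the reply can be checked against the passage it cites.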

AI projects in customer service often fail due to lengthy planning phases and oversized requirement catalogs. At RATIONAL, we deliberately chose the opposite approach: develop an MVP in 100 days that concretely demonstrates what AI can deliver.

The advantage: instead of spending months discussing concepts in meetings, decision-makers had a functioning system in hand after 100 days, complete with real user experience, measurable results, and a solid basis for deciding on further development.

This is precisely what convinced internal stakeholders at RATIONAL.

In the RATIONAL project, we unlocked data from very diverse sources: recipes, operating manuals for different appliance generations and operating systems, service handbooks, video documentation, and internal databases.

The challenge lay less in the volume than in the heterogeneity — different formats, languages, and structures. Our data team systematically cleaned, standardized, and consolidated these sources into a unified knowledge pool.

In principle, all text-based data sources are suitable for RAG systems: PDFs, wikis, ticketing systems, email archives, product data sheets, or FAQ collections.

Ready When You Are

The future begins when human intelligence develops artificial intelligence. The first step is just a click.
